S3 is not just for backups

For a long time, I treated S3 as a backup location for databases. After spending the last few months thinking about cloud architecture and designing Epsilon, I slowly learned that services like S3 can be a core storage component in a cloud architecture.

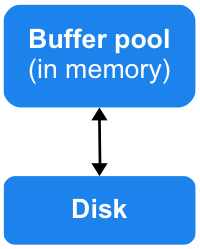

Here’s the gist of my previous thinking:

On a single instance of a database like MySQL, data are in two places: cached in the buffer pool in memory or on disk.

The disk is considered to be durable storage. For backups, depending on the strategy you use, you either end up with a single snapshot of the data or incremental pieces. Everything ends up going to S3 (or some equivalent) and stays untouched until you actually need to use the backups (maybe for disaster recovery).

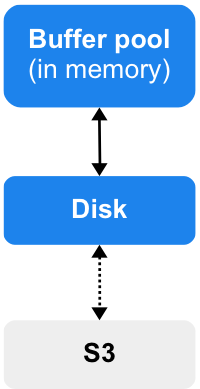

Now, I believe that S3 can play a way more active role. What you can also do is add S3 as another storage tier.

Instead of treating S3 as some continuous backup destination, you can actually treat it like the system of record, i.e. what your storage system actually is. At that point, the disk starts to look like a cache, just like the buffer pool. But unlike the buffer pool, it’s a durable cache. But does it need to be?

At VividCortex, all of our “big” data are stored in Kafka before reaching MySQL. If we had continuous backups to S3, we actually wouldn’t require MySQL to be fully durable. There would be data that are in MySQL that haven’t reached S3 yet, but those are already durable in Kafka.

In MySQL terms, a MySQL instance just holds dirty data before checkpointing to S3. If we have a crash or instance failure, we recover using the Kafka log. Just like how pages can be read into the buffer pool on-demand from the disk, you can do the same using S3.

Redshift apparently does that already:

Redshift pages data in from S3.https://t.co/C8z2aXyPoz pic.twitter.com/jFWl3wRg3j

— Preetam (@PreetamJinka) February 8, 2017

With this approach, you can scale compute independently of storage, which I think is a huge win.

If you want to learn more, I suggest watching Netflix’s talk from re:Invent 2016:

“Using Amazon S3 as the fabric of our big data ecosystem.”

YouTube: https://www.youtube.com/watch?v=o52vMQ4Ey9I

Slides: